Upgrading to VCSA 6 fails

I began upgrading a VMware vCenter Server Appliance (VCSA) from 5.5 to 6 in a small vSphere environment (‘Tiny’, in VMware’s own terminology) of 3 hosts and about 30 VMs. I certainly didn’t anticipate any trouble beyond the usual hassles of upgrading an infrastructure-level service like vCenter.

Unfortunately, despite carefully following VMware’s documented procedures and the best advice some of my favorite blogs had to offer (see: VMware KB 2109772, http://www.vladan.fr/how-to-upgrade-from-vcsa-5-5-to-6-0/, and http://www.virtuallyghetto.com/2015/09/how-to-upgrade-from-vcsa-5-x-6-x-to-vcsa-6-0-update-1.html), the upgrade still failed.

When the installer failed, the only message the web installer offered was the well-known “Firstboot script execution error.”

Furthermore, the log files failed to download, so I didn’t even have the usual log bundle on my Windows desktop to work from.

Normally, the Firstboot script execution error is caused by incorrectly configured DNS, so the first thing I tested was forward and reverse DNS resolution; both were working perfectly.
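A forward and reverse check like this can be scripted so it is easy to repeat before each attempt. The sketch below is a minimal Python version built on the standard socket module; the hostname in the usage line is hypothetical, and the function name check_forward_reverse is illustrative rather than part of any VMware tooling. The resolver functions are parameters only so the logic can be exercised without a live DNS server.

```python
import socket
from typing import Callable, List, Tuple

def check_forward_reverse(
    fqdn: str,
    forward: Callable[[str], str] = socket.gethostbyname,
    reverse: Callable[[str], Tuple[str, List[str], List[str]]] = socket.gethostbyaddr,
) -> Tuple[bool, str]:
    """Resolve fqdn to an IP, resolve that IP back to a name, and compare."""
    try:
        ip = forward(fqdn)  # forward (A record) lookup
    except OSError as exc:  # socket.gaierror is a subclass of OSError
        return False, f"forward lookup of {fqdn} failed: {exc}"
    try:
        name, aliases, _ = reverse(ip)  # reverse (PTR record) lookup
    except OSError as exc:  # covers socket.herror and socket.gaierror
        return False, f"reverse lookup of {ip} failed: {exc}"
    # Compare case-insensitively and ignore any trailing dot.
    wanted = fqdn.rstrip(".").lower()
    names = {n.rstrip(".").lower() for n in [name, *aliases]}
    if wanted in names:
        return True, f"{fqdn} -> {ip} -> {name}"
    return False, f"{fqdn} -> {ip} -> {name} (name mismatch)"

if __name__ == "__main__":
    # Hypothetical appliance FQDN; substitute the name given to the installer.
    print(check_forward_reverse("vcsa.example.local"))
```

A passing result only shows that name resolution round-trips. As this upgrade went on to show, the Firstboot error can have other causes.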

I decided to dig further into the issue and found that the SSH daemon on the new VM had started, so I connected with PuTTY to have a look around.

Side note: upgrading from VCSA 5.5 to VCSA 6 requires creating a brand-new VM, followed by a supposedly automatic migration of data. This leaves your original VCSA in a powered-off state while the new VM takes its place.

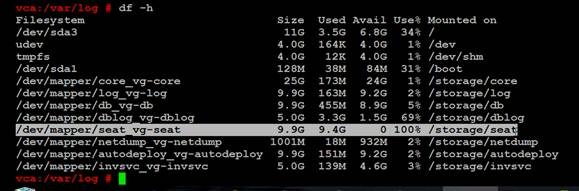

While I was connected via SSH (PuTTY), I did some more poking around and eventually ran df -h on the VM that was supposed to be my new, ‘upgraded’ VCSA 6.

The problem was immediately apparent: the /storage/seat partition (virtual disk) was completely full! In VCSA 6, /storage/seat holds the Postgres Stats, Events, and Tasks (SEAT) data.
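Scanning df -h output by eye works, but the same check can be scripted. The minimal Python 3 sketch below flags any mount point at or above a usage threshold. It computes the percentage the way df computes Use% (used divided by used plus space available to non-root users). The 95% threshold and the function names are illustrative assumptions. It also relies on shutil.disk_usage, which requires Python 3.3 or later, and the appliance’s own shell may not provide that.

```python
import os
import shutil
from typing import Dict, Iterable

def usage_percent(path: str) -> float:
    """Percent used, computed like df's Use% column: used / (used + available)."""
    du = shutil.disk_usage(path)  # total, used, free (free = available to non-root)
    denom = du.used + du.free
    return 100.0 * du.used / denom if denom else 0.0

def full_mounts(paths: Iterable[str], threshold: float = 95.0) -> Dict[str, float]:
    """Return {path: percent_used} for each existing path at or above threshold."""
    result = {}
    for p in paths:
        if not os.path.exists(p):
            continue  # e.g. /storage/seat only exists on the appliance itself
        pct = usage_percent(p)
        if pct >= threshold:
            result[p] = round(pct, 1)
    return result

if __name__ == "__main__":
    # Add the appliance's other /storage/* mount points as needed.
    print(full_mounts(["/storage/seat", "/"]))
```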

Side note: VCSA 6 puts each of its primary partitions on a separate virtual disk, 11 in total. This is a great advantage for long-term scalability, but something of a disadvantage compared to a single disk, where every partition can grow to the full capacity of the disk. To learn more about what each of the partitions/disks does, see this excellent write-up on virtuallyGhetto: Multiple VMDKs in VCSA 6.0?

What I (and VMware) had failed to account for in sizing the VCSA was the potential for an extraordinary number of tasks and events. While this may be a ‘Tiny’ deployment by VMware’s standards, a Horizon View environment plus Veeam Backup and Replication running on a sub-1-hour RPO generates a volume of tasks and events closer to what VMware seems to expect from a ‘Medium’ deployment.

One potential solution would have been to reclaim or purge data from the Postgres database on the VCSA 5.5 before attempting the upgrade, but the owner preferred to preserve all of the data if possible.

The Solution

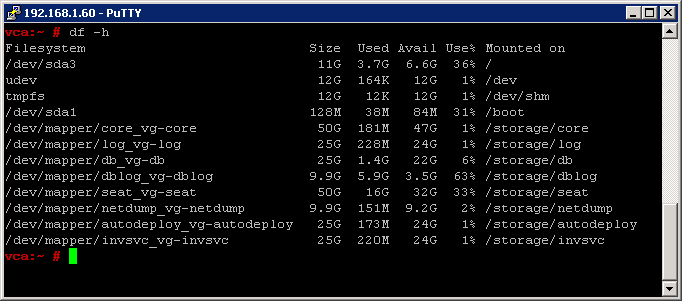

In the end, the solution was simply to select a larger deployment size in the web installer wizard. As it turned out, the /storage/seat disk for a ‘Tiny’ deployment was only 10GB, while for a ‘Medium’ deployment it was 50GB.
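The arithmetic behind the fix is short. The successful upgrade peaked at just over 17GB of SEAT data, more than the entire 10GB ‘Tiny’ disk, so the next size that fits is ‘Medium’. The sketch below encodes only the two disk sizes reported here. The 25% headroom factor is an assumption, and the other deployment sizes are deliberately left out rather than guessed.

```python
from typing import Optional

# /storage/seat disk sizes (GB) observed in this upgrade. Other deployment
# sizes exist but are omitted here rather than guessed.
SEAT_DISK_GB = {"Tiny": 10, "Medium": 50}

def smallest_fitting_size(peak_seat_gb: float, headroom: float = 1.25) -> Optional[str]:
    """Return the smallest known deployment whose SEAT disk covers peak * headroom."""
    needed = peak_seat_gb * headroom  # assumed safety margin on top of the observed peak
    for name, size_gb in sorted(SEAT_DISK_GB.items(), key=lambda kv: kv[1]):
        if size_gb >= needed:
            return name
    return None  # nothing in the table is large enough

print(smallest_fitting_size(17))  # 17 * 1.25 = 21.25GB needed: Tiny (10) fails, Medium (50) fits
```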

During the upgrade (which took over 2 hours), I connected via SSH as soon as the daemon had started and ran df -h a number of times (I should have used watch). I saw the /storage/seat volume grow slowly, eventually reaching over 17GB of used space, before settling back to 16GB once the upgrade completed successfully.
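Rerunning df -h by hand (or running watch df -h) is the quickest option. If you would rather keep a record of the growth curve, the minimal Python 3 sketch below polls a path at a fixed interval and returns timestamped samples. The interval and iteration counts are illustrative. Like df -h, it reports sizes in binary units (GiB), and it assumes Python 3.3 or later is available wherever it runs.

```python
import shutil
import time
from typing import List, Tuple

def watch_usage(path: str, interval_s: float = 60.0, iterations: int = 10) -> List[Tuple[float, float]]:
    """Poll used space on `path`; print and return (elapsed_seconds, used_gib) samples."""
    samples = []
    start = time.monotonic()
    for i in range(iterations):
        used_gib = shutil.disk_usage(path).used / 1024**3  # binary GiB, as df -h reports
        elapsed = time.monotonic() - start
        samples.append((elapsed, used_gib))
        print(f"{elapsed:8.0f}s  {path}: {used_gib:.2f} GiB used")
        if i < iterations - 1:
            time.sleep(interval_s)  # skip the sleep after the final sample
    return samples

if __name__ == "__main__":
    # Roughly two hours of samples at 30-second intervals.
    history = watch_usage("/storage/seat", interval_s=30, iterations=240)
    print("peak:", max(u for _, u in history))
```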

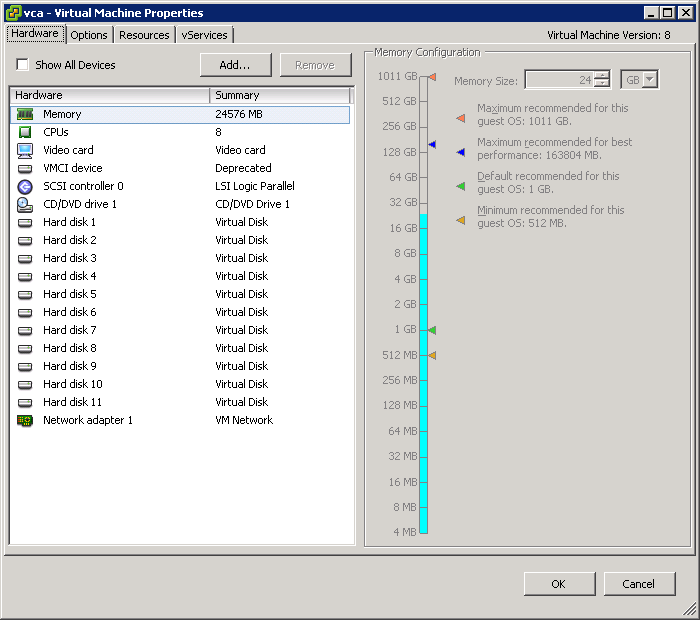

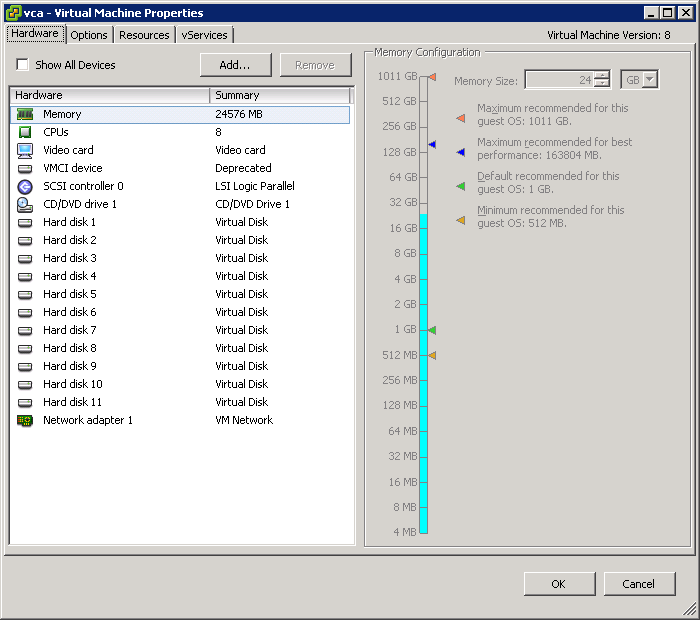

The only drawback I can think of to specifying a ‘Medium’ deployment for a relatively small environment is that the VM’s allocation of 24GB RAM and 8 vCPUs is far more than the environment requires. At my earliest opportunity, I plan to shut the VCSA down and scale it back to around 16GB RAM and perhaps 4 vCPUs to better suit the environment.